AutoSP Design

Automated Sequence Parallelism

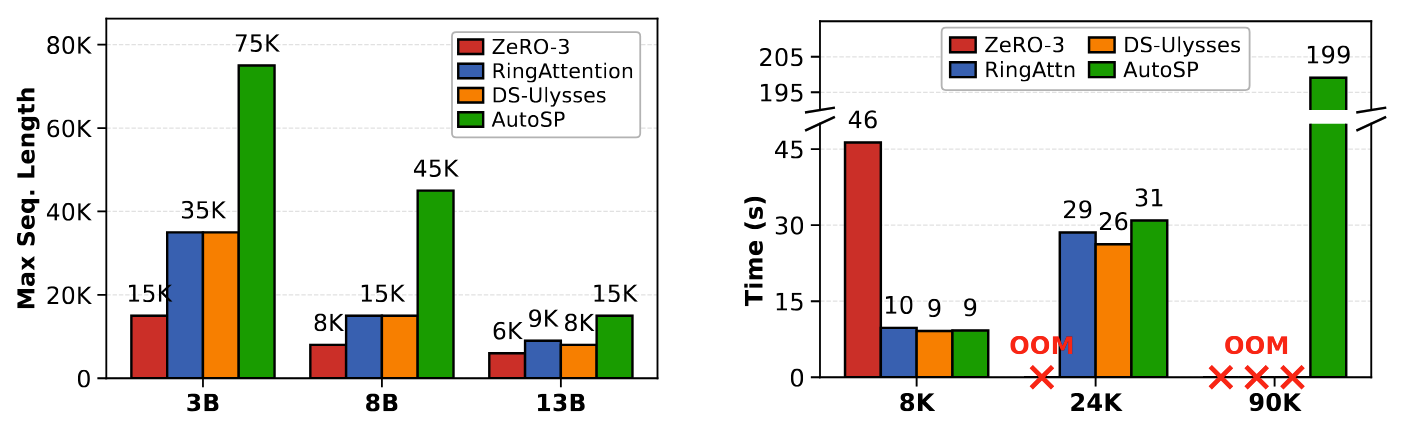

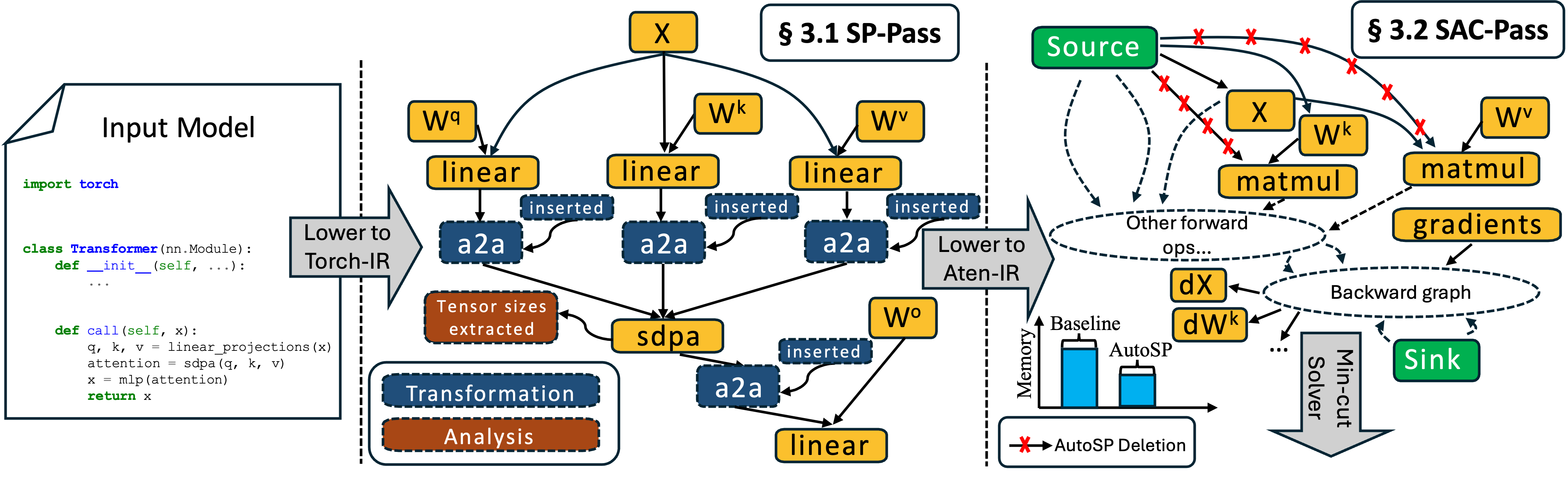

AutoSP introduces compiler passes that automatically shard inputs along the token (sequence) dimension, shard intermediate activation buffers, insert communication collectives, and reason about correctness for both the forward and backward passes. AutoSP specifically implements DeepSpeed-Ulysses-style sequence parallelism, because Ulysses' communication overhead stays constant as GPU counts increase on NVLink topologies or fat-tree networks.
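To make the Ulysses-style data movement concrete, here is a minimal single-process sketch in NumPy. It is not AutoSP's implementation: the "ranks" are simulated as list entries, the all-to-all collectives are emulated with array splits and concatenations, and all function names (`ulysses_attention`, `all_to_all_seq_to_head`, `all_to_all_head_to_seq`) are hypothetical. It assumes non-causal multi-head attention and that both the sequence length and the head count are divisible by the number of ranks.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(q, k, v):
    # q, k, v: [seq, heads, head_dim]; full (non-causal) attention per head.
    scores = np.einsum("shd,thd->hst", q, k) / np.sqrt(q.shape[-1])
    return np.einsum("hst,thd->shd", softmax(scores), v)


def all_to_all_seq_to_head(shards):
    # Emulated all-to-all.
    # In:  shards[r] is [seq/P, heads, d] (rank r owns a sequence chunk).
    # Out: out[r]    is [seq, heads/P, d] (rank r owns a group of heads).
    p = len(shards)
    return [
        np.concatenate([np.split(s, p, axis=1)[r] for s in shards], axis=0)
        for r in range(p)
    ]


def all_to_all_head_to_seq(shards):
    # Emulated inverse all-to-all.
    # In:  shards[r] is [seq, heads/P, d].
    # Out: out[r]    is [seq/P, heads, d].
    p = len(shards)
    return [
        np.concatenate([np.split(s, p, axis=0)[r] for s in shards], axis=1)
        for r in range(p)
    ]


def ulysses_attention(q, k, v, world_size):
    # 1. Shard q, k, v along the sequence dimension, one chunk per rank.
    q_s, k_s, v_s = (np.split(x, world_size, axis=0) for x in (q, k, v))
    # 2. All-to-all: each rank now holds the full sequence for a subset of heads.
    q_h, k_h, v_h = (all_to_all_seq_to_head(x) for x in (q_s, k_s, v_s))
    # 3. Each rank runs ordinary attention on its own heads.
    out_h = [attention(qi, ki, vi) for qi, ki, vi in zip(q_h, k_h, v_h)]
    # 4. All-to-all back to sequence sharding.
    out_s = all_to_all_head_to_seq(out_h)
    # Gather the per-rank chunks only so the result can be compared
    # against unsharded attention.
    return np.concatenate(out_s, axis=0)
```

Because attention heads are independent, calling `ulysses_attention(q, k, v, world_size=2)` on random inputs should match `attention(q, k, v)` up to floating-point error. In a real distributed setting the two emulated helpers would be replaced by actual all-to-all collectives, and the final gather would not be needed.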

Sequence-Aware Activation Checkpointing

AutoSP additionally introduces a sequence-aware activation checkpointing (SAC) compiler pass that exploits the FLOP dynamics unique to long-context workloads to enable training at even longer context lengths. Although PyTorch 2 provides automated activation checkpointing (AC) based on a max-flow/min-cut formulation, we find it overly conservative for long-context training. SAC aims to remedy this and make long-context training more feasible.
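As a rough illustration of how FLOP dynamics change with context length, the back-of-the-envelope sketch below estimates forward FLOPs for one transformer layer. It does not describe SAC's actual policy. It assumes a standard layer with hidden size `hidden`, a 4x MLP expansion, non-causal attention, and 2 FLOPs per multiply-accumulate. The function names are hypothetical.

```python
def layer_forward_flops(seq_len, hidden):
    """Estimate forward FLOPs of one transformer layer (assumed shape).

    Returns (linear_flops, attention_flops):
      linear_flops:    QKV + output projections (8*s*h^2) plus a 4x MLP (16*s*h^2)
      attention_flops: QK^T scores (2*s^2*h) plus scores @ V (2*s^2*h)
    """
    linear_flops = 24 * seq_len * hidden ** 2
    attention_flops = 4 * seq_len ** 2 * hidden
    return linear_flops, attention_flops


def attention_fraction(seq_len, hidden):
    # Share of the layer's forward FLOPs spent in the attention core.
    # Under the assumptions above this simplifies to s / (6h + s).
    linear_flops, attention_flops = layer_forward_flops(seq_len, hidden)
    return attention_flops / (linear_flops + attention_flops)


if __name__ == "__main__":
    for s in (4096, 32768, 131072, 1048576):
        print(f"seq_len={s:>8}  attention share={attention_fraction(s, 4096):.3f}")
```

Under these assumptions the attention core's share of FLOPs is s / (6h + s), so it grows from about 14% at s = h = 4096 to about 84% at s = 131072. The linear layers therefore become comparatively cheap to recompute at long context, which is one plausible reading of the dynamics a sequence-aware checkpointing pass could exploit.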