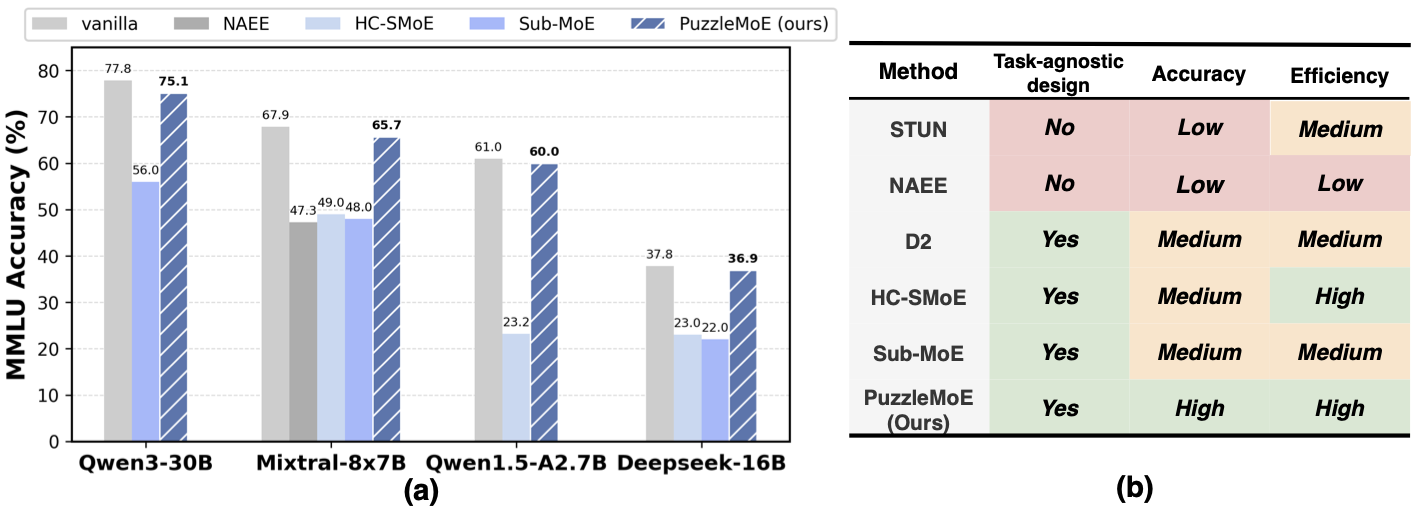

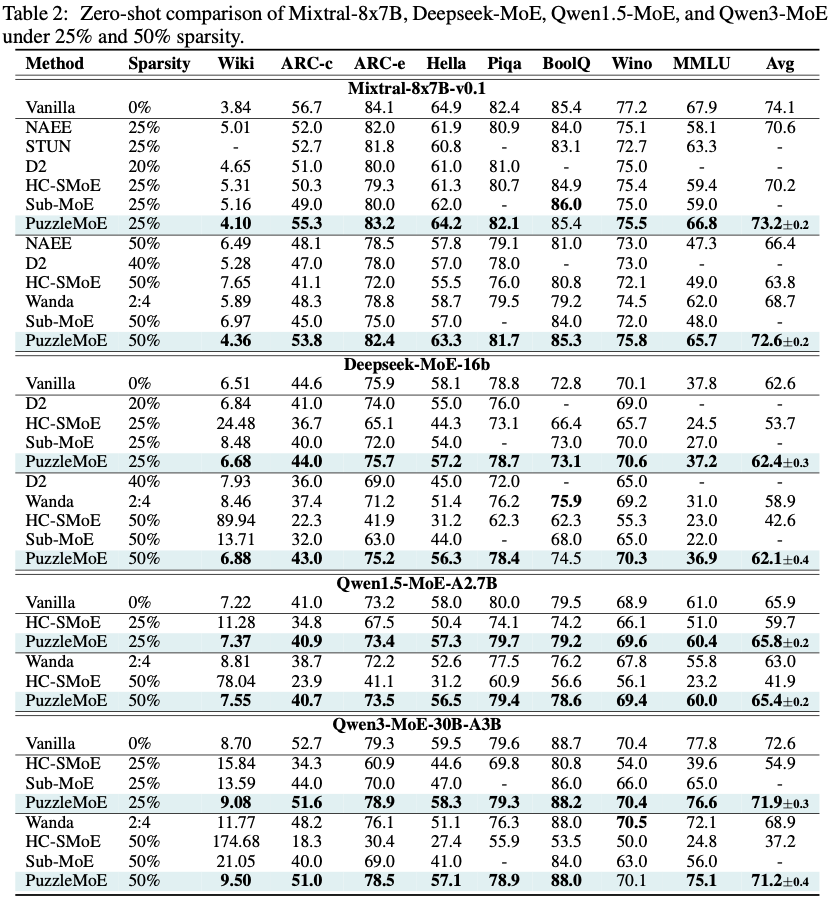

Mixture-of-Experts (MoE) models have shown strong potential in scaling language models efficiently by activating only a small subset of experts per input. However, their widespread deployment remains limited due to the high memory overhead associated with storing all expert parameters, particularly as the number of experts increases. To address this challenge, prior works have explored expert dropping and merging strategies, yet they often suffer from performance drop at high compression ratios. In this paper, we introduce PuzzleMoE, a training-free MoE compression method that achieves both high accuracy and efficient inference through two key innovations: First, PuzzleMoE performs sparse expert merging by identifying element-wise weight redundancy and specialization. It uses a dual-mask to capture both shared and expert-specific parameters. Second, to avoid the overhead of storing binary masks and signs, PuzzleMoE introduces a bit-packed encoding scheme that reuses underutilized exponent bits, enabling efficient MoE inference on GPUs. Extensive experiments demonstrate that PuzzleMoE can compress MoE models by up to 50% while maintaining accuracy across various tasks. Specifically, it outperforms prior MoE compression methods by up to 16.7% on MMLU at 50% compression ratio, and achieves up to 1.28× inference speedup.

PuzzleMoE achieves efficient fine-grained sparse expert merging through a deliberately designed pairwise dual-mask merging algorithm and a system co-design with bit-level packing technique. These two designs can effectively maintain MoE models' performance after compression while minimizing the masking overhead at the inference stage.

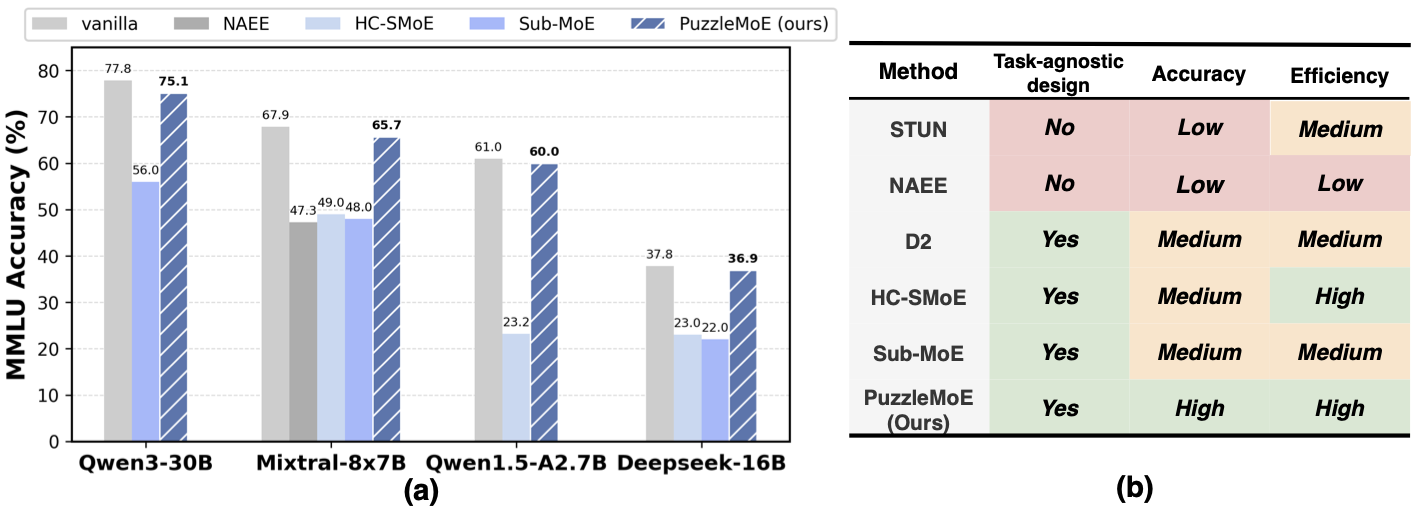

We merge a pair of experts i and j by (i) computing per-expert saliency masks \(\mathbf{M}^{\mathrm{sal}}_i\) and \(\mathbf{M}^{\mathrm{sal}}_j\) to retain high-importance weights, (ii) building a cross-expert similarity mask \(\mathbf{M}^{\mathrm{sim}}\) to keep aligned weights, and (iii) extracting sign matrices \(\mathbf{S}_i\) and \(\mathbf{S}_j\). The merged magnitude \(\mathbf{W}_{\mathrm{merged}}\) is then defined element-wise by

\[ \mathbf{W}_{\mathrm{merged}} = \mathbf{M}^{\mathrm{sim}} \odot \tfrac{|\mathbf{W}_i| + |\mathbf{W}_j|}{2} \;+\; \bigl(\mathbf{1} - \mathbf{M}^{\mathrm{sim}}\bigr) \odot \bigl(\mathbf{M}^{\mathrm{sal}}_{i}\odot|\mathbf{W}_i| + \mathbf{M}^{\mathrm{sal}}_{j}\odot|\mathbf{W}_j|\bigr), \]where \(\odot\) denotes element-wise multiplication and \(\mathbf{1}\) is the all-ones matrix of matching shape. Finally, we store \(\{\mathbf{M}^{\mathrm{sal}}_{i}, \mathbf{M}^{\mathrm{sal}}_{j}, \mathbf{S}_{i}, \mathbf{S}_{j}, \mathbf{W}_{\mathrm{merged}}\}\) for inference.

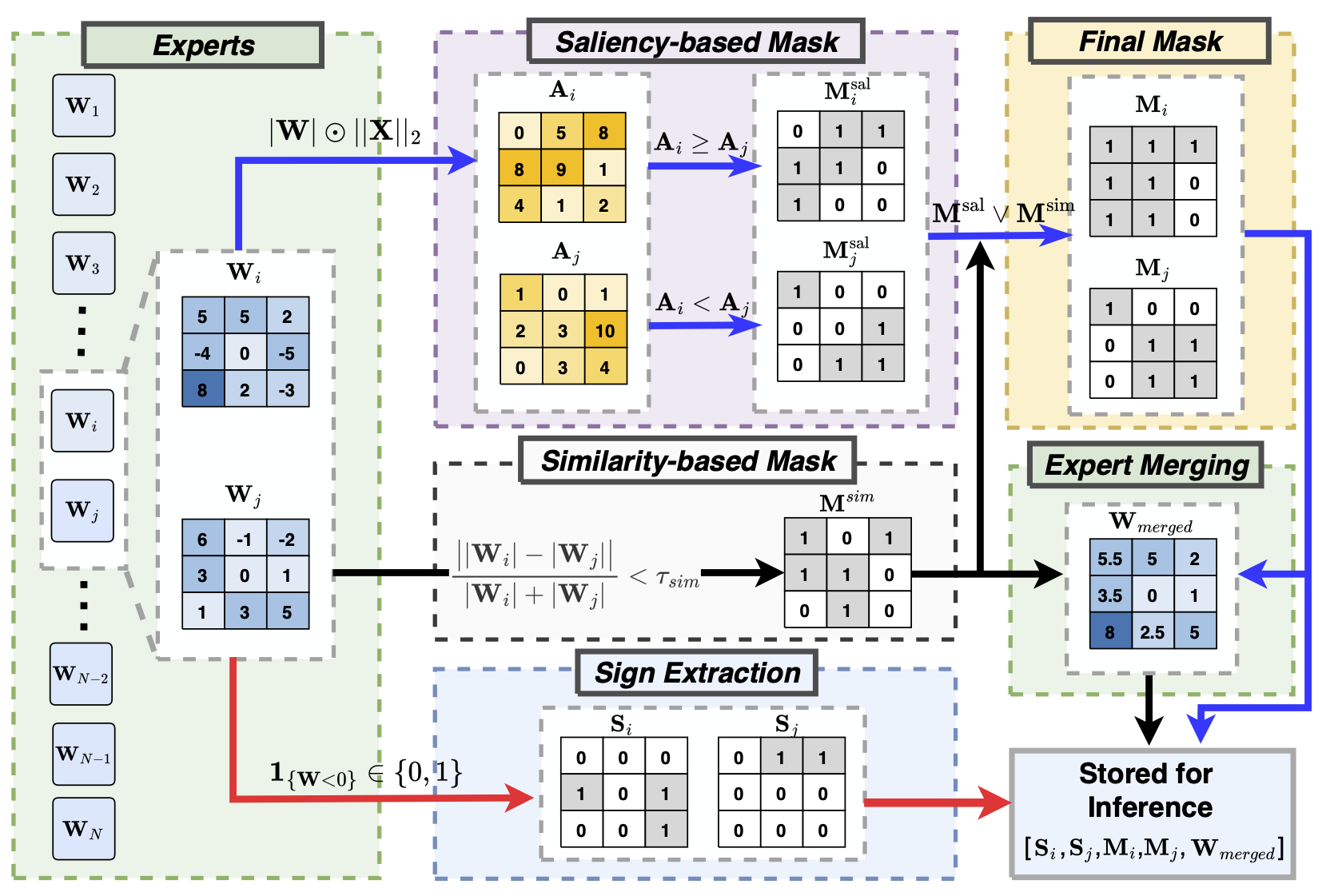

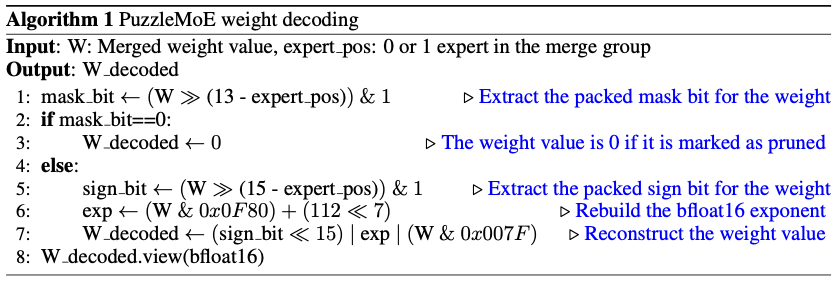

The Bfloat16 data format, which allocates 1 sign, 8 exponent, and 7 mantissa bits, is standard for LLM inference. We found that MoE LLMs underutilize the available 8-bit exponent range: expert-weight exponents concentrate in a narrow band, leaving much of the dynamic range unused. For Mixtral-8×7B (Figure 2(a)), most exponents fall between 112 and 128.

Leveraging the concentrated exponent distribution, we apply a fixed shift to map all exponents into a 5-bit range, as illustrated in Figure 3(b). Specifically, any exponent smaller than 112 is rounded up to 112, and then all exponents are shifted down by 112, resulting in values that fall within the range of 0 to 31. This shift operation frees 3 bits within the original 8-bit exponent field, which can be used to pack the binary mask bits and a sign bit. Hence, the resultant $\mathbf{W}_{merged}$ is stored in a standard Bfloat16 data format, effectively embedding the masks and signs without additional storage.

PuzzleMoE can effectively reserve MoE LLMs’ performance after aggressive expert merging, whose superior performance arises from its fine-grained sparse expert merging strategy.

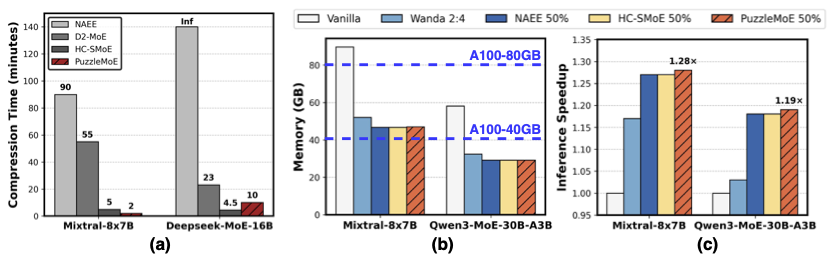

(a) PuzzleMoE only takes 2 minutes for compressing Mixtral-8x7B, while D2 takes 55 minutes due to the heavy computation cost of SVD operation. For Deepseek-MoE, PuzzleMoE takes 10 minutes for compression, indicating the efficiency of PuzzleMoE on MoE models with a large number of experts. Note that NAEE’s reliance on exhaustive search makes the compression time quite expensive. For instance, applying it to DeepSeek-MoE with 64 experts requires $10^{18}$ on the order of forward passes, rendering it infeasible in practice.

(b) and (c): For Mixtral-8x7B, the full model necessitates two A100-80G GPUs for inference, whereas the compressed model can be deployed on a single A100-80G GPU. Similarly, for Qwen3-MoE, which is evaluated on A100-40G GPUs, the full model requires two GPUs for inference, while the compressed model is capable of inference on a single GPU. Moreover, PuzzleMoE achieves an inference speedup of $1.28\times$ for Mixtral-8x7B and $1.19\times$ for Qwen3-MoE, which are marginally better than the existing compression methods and with great accuracy gains.